Standard “Comfort” AC: Designed for people. SHR (sensible heat ratio): 0.65-0.75, so 25-35% of capacity goes to humidity removal instead of lowering temperature.

Critical Infrastructure & Server Cooling

The Physics Problem: Comfort Cooling vs. Process Cooling

The Hidden Danger: Over-Dehumidification

Why Your Server Room AC Fails Every Winter

Cold-Weather AC Failure Sequence:

Low-Ambient Solutions:

Baytown's Shoulder Season Trap:

Eliminating Single Points of Failure

The Math of Downtime

- Server hardware at risk: $30,000-100,000

- Data recovery costs: $10,000-50,000+

- Business interruption: 25 employees × $50/hour × downtime hours

- A weekend failure easily exceeds $100,000 total impact
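To see how these figures add up, here is a rough sketch of the arithmetic. The function name and the 16-hour outage are illustrative assumptions, not figures from an actual incident. The dollar ranges and the 25 employees × $50/hour rate come from the list above.

```python
def downtime_cost(hours, employees=25, hourly_rate=50.0,
                  hardware_loss=0.0, recovery_cost=0.0):
    """Estimate the total dollar impact of a cooling failure.

    Business interruption follows the formula above:
    employees x hourly rate x downtime hours.
    """
    interruption = employees * hourly_rate * hours
    return interruption + hardware_loss + recovery_cost


# Assumed scenario: 16 working hours lost (hypothetical duration).
low = downtime_cost(16, hardware_loss=30_000, recovery_cost=10_000)
high = downtime_cost(16, hardware_loss=100_000, recovery_cost=50_000)
print(f"Estimated impact: ${low:,.0f} to ${high:,.0f}")
```

Even with a short outage, the hardware and recovery terms dominate, which is why the total climbs past six figures so quickly.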

N+1 Redundancy

Enough capacity for full load (N), plus one additional unit (+1). If any unit fails, remaining capacity handles full load while repairs are made.
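One way to check whether a set of units meets N+1 is to remove the largest unit and see if the rest still cover the load. The sketch below assumes capacities are expressed in tons. The unit sizes in the example are hypothetical, and `has_n_plus_1` is an illustrative name.

```python
def has_n_plus_1(unit_tons, load_tons):
    """Return True if the load is still covered after losing any one unit.

    Losing the largest unit is the worst case, so that is the one removed.
    """
    if len(unit_tons) < 2:
        return False  # a single unit can never be redundant
    remaining = sum(unit_tons) - max(unit_tons)
    return remaining >= load_tons


# Hypothetical layouts for a 6-ton load
print(has_n_plus_1([6, 6], 6))     # two full-size units
print(has_n_plus_1([3, 3, 3], 6))  # three half-size units
print(has_n_plus_1([3, 3], 6))     # enough capacity, but no spare
```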

Lead/Lag Control Logic

- Lead/Lag Rotation: Units swap roles automatically (typically every 24 hours) for equal runtime

- Automatic Failover: Temperature rise triggers lag unit instantly; alert sent to responsible parties

- Manual Override: Maintenance on one unit while other carries load—no cooling interruption
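The three behaviors above can be sketched as a small controller. This is a simplified illustration, not the logic of any particular control panel: the class name, the 80°F failover setpoint, and the unit labels are all assumptions. Only the 24-hour rotation interval comes from the list above.

```python
from dataclasses import dataclass, field


@dataclass
class LeadLagController:
    units: tuple = ("A", "B")        # hypothetical unit labels
    rotate_hours: float = 24.0       # typical rotation interval
    failover_temp_f: float = 80.0    # assumed setpoint, site-specific in practice
    lead_index: int = 0
    hours_since_rotation: float = 0.0
    maintenance: set = field(default_factory=set)
    alerts: list = field(default_factory=list)

    def step(self, room_temp_f, elapsed_hours):
        """Advance the clock and return the units that should be running."""
        # Lead/lag rotation: swap roles once the interval has elapsed.
        self.hours_since_rotation += elapsed_hours
        if self.hours_since_rotation >= self.rotate_hours:
            self.lead_index = (self.lead_index + 1) % len(self.units)
            self.hours_since_rotation = 0.0

        lead = self.units[self.lead_index]
        lag = self.units[(self.lead_index + 1) % len(self.units)]

        # Manual override: a unit under maintenance is never started.
        available = [u for u in (lead, lag) if u not in self.maintenance]
        running = available[:1]

        # Automatic failover: a temperature rise starts the lag unit and alerts.
        if room_temp_f >= self.failover_temp_f:
            running = available
            self.alerts.append(
                f"High temperature {room_temp_f:.1f}F; running {running}"
            )
        return running


controller = LeadLagController()
print(controller.step(room_temp_f=72, elapsed_hours=1))  # lead unit only
print(controller.step(room_temp_f=85, elapsed_hours=1))  # both units, alert logged
```

A real controller would also watch unit fault signals and send alerts by text or email; here the alerts are simply collected in a list.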

Example: 6-Ton Server Room

The Goldilocks Zone: Not Too Wet, Not Too Dry

Hot Aisle, Cold Aisle: Directing Cooling Where It Matters

Benefits of Proper Airflow:

We Consult on Rack Placement:

Keeping Dust Out of Your Data

MERV 8

MERV 11-13

HEPA (MERV 17+)

Baytown Industrial Air Quality:

Knowing Before It's Too Late

Monitoring Fundamentals:

Response Time Matters:

Frequently Asked Questions

Can I just use a mini-split for my server closet?

Not recommended for anything mission-critical. Mini-splits are comfort-cooling equipment with a low SHR, no low-ambient controls, and zero redundancy. For critical data, purpose-built server room cooling is the appropriate choice.

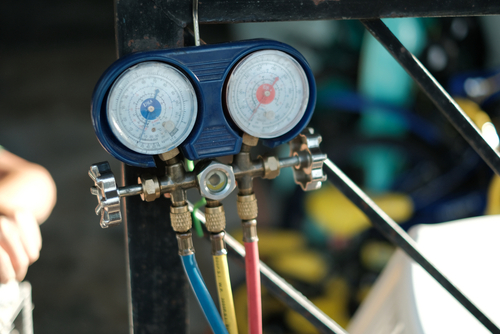

What's the difference between CRAC and standard AC?

CRAC (Computer Room Air Conditioner) units feature high SHR, precise humidity control, low-ambient capability, and better filtration. Standard AC is built for human comfort: a different application that calls for different equipment.
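To put numbers on the SHR difference, here is a small sketch. Sensible capacity is nominal capacity multiplied by SHR. The 0.70 comfort value sits inside the 0.65-0.75 range quoted for standard AC, while the 0.95 CRAC value and the 5-ton unit size are assumptions for illustration only.

```python
def sensible_tons(nominal_tons, shr):
    """Capacity that actually lowers temperature (sensible cooling), in tons."""
    return nominal_tons * shr


comfort = sensible_tons(5, 0.70)  # comfort AC, mid-range SHR
crac = sensible_tons(5, 0.95)     # assumed CRAC SHR, for illustration only
print(f"5-ton comfort unit: {comfort:.2f} tons sensible")
print(f"5-ton CRAC unit:    {crac:.2f} tons sensible")
```

Servers put out almost entirely dry heat, so the sensible figure is the one that matters for a server room.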

How do I size server room cooling?

Based on actual heat load (total watts from equipment), not square footage. We calculate from nameplates, UPS sizing, and power data—not “tons per square foot” guesswork.
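The conversion from watts to cooling tons is straightforward, since nearly all the power a server draws ends up as heat. The sketch below uses the standard factors of 3.412 BTU/hr per watt and 12,000 BTU/hr per ton. The equipment wattages are hypothetical, and a real sizing would add margin for growth, lighting, and other loads.

```python
WATTS_TO_BTU_PER_HR = 3.412   # 1 W of electrical load is about 3.412 BTU/hr of heat
BTU_PER_HR_PER_TON = 12_000   # 1 ton of cooling = 12,000 BTU/hr


def cooling_tons(equipment_watts):
    """Convert a list of equipment power draws (watts) to tons of heat load."""
    total_watts = sum(equipment_watts)
    return total_watts * WATTS_TO_BTU_PER_HR / BTU_PER_HR_PER_TON


# Hypothetical loads: server rack, storage array, network gear and UPS losses
loads = [4000, 2500, 1500]
print(f"{sum(loads)} W is roughly {cooling_tons(loads):.2f} tons of heat load")
```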

What happens if cooling fails?

How fast the temperature rises depends on heat load density. Dense installations reach damaging temperatures (>90°F) in 10-15 minutes. Equipment thermal-throttles, then shuts down. If temperatures exceed limits before shutdown, permanent damage occurs.

Why do I need cooling in winter?

Servers generate heat regardless of outdoor temperature. Standard AC can’t run reliably when outdoor temperatures drop below 55-65°F. Low-ambient controls extend the operating range for year-round cooling.

Can you add redundancy to an existing single-unit installation?

Usually yes. We can add a second unit with lead/lag control for N+1 redundancy. This requires an electrical capacity assessment and enough physical space for the additional unit.